Why Your CLAUDE.md Is Too Long (And How I Fixed Mine)

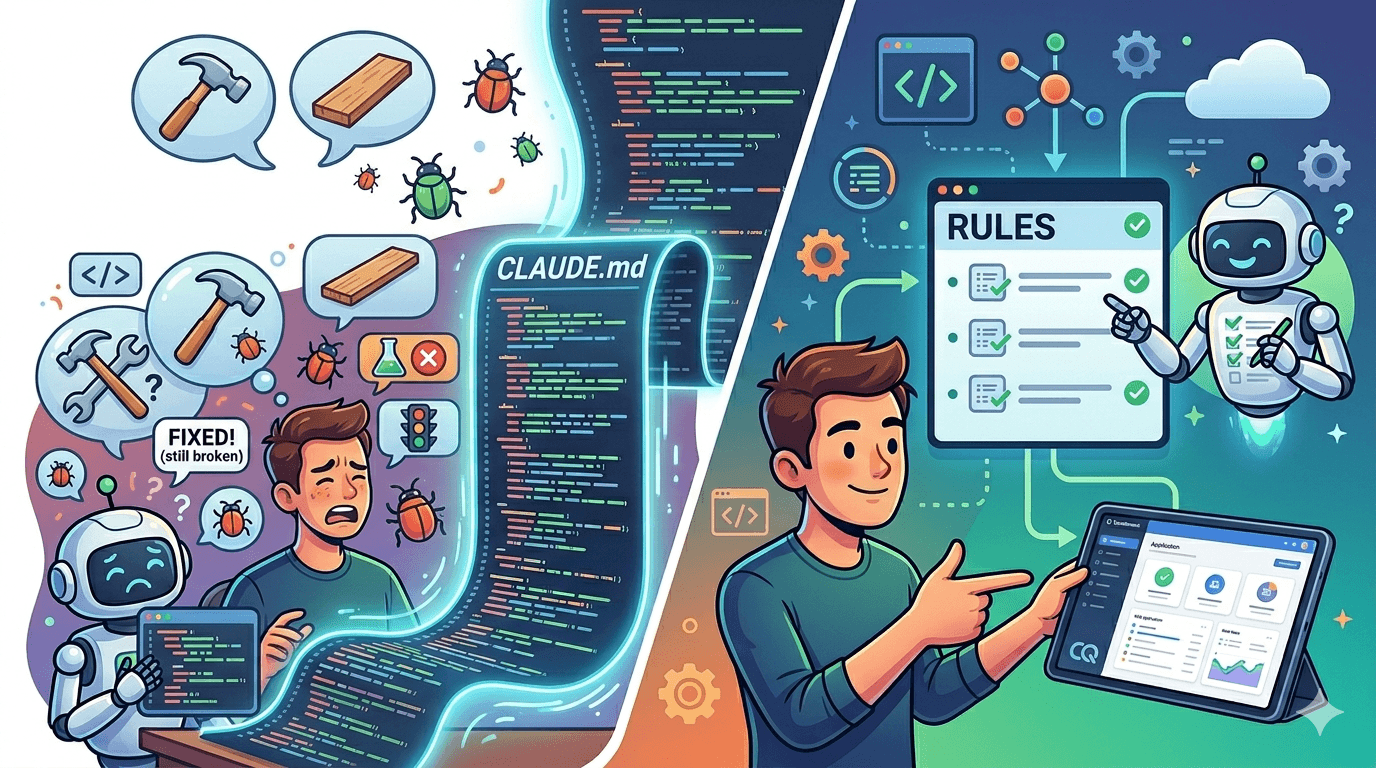

900 lines of instructions. Claude ignored most of them. The fix wasn’t more rules — it was fewer.

Claude Code Is Brilliant. It Also Lies to Your Face.

I was three weeks into building Questimate.co — a contractor growth platform that helps residential contractors generate accurate estimates and invoices — using Claude Code as my AI pair programmer.

Claude wrote clean code. It understood my architecture. It moved fast. But it had three habits that were costing me hours every week:

It would say “Fixed!” when the bug was still there. It would invent supplier names — companies that don’t exist — and embed them in the UI like verified data. And it would declare features “ready for demo” without running a single test.

The pattern was maddening because Claude wasn’t wrong about how to build things. It was wrong about whether it had actually built them correctly. Every session started fresh, and I was burning time re-teaching the same lessons I’d taught yesterday. Then I found the fix: a file called CLAUDE.md.

Why This Matters Right Now

This isn’t a niche tool for early adopters anymore. Claude Code business subscriptions have quadrupled since January 2026, and enterprise use now accounts for over half of all Claude Code revenue. Anthropic’s own numbers put Claude Code at a $2.5 billion run-rate, more than double where it stood at the start of the year.

The adoption numbers are staggering, but the most telling data point comes from healthcare. At Epic — the company behind MyChart — over half of Claude Code usage now comes from non-developer roles. Support staff, implementation teams, and operations people — not engineers — are the majority users. PMs, you’re next.

Meanwhile, Uber’s CTO admitted in April that the company blew through its entire 2026 AI budget in four months because Claude Code adoption among 5,000 engineers outpaced every projection. Adoption is outrunning governance. Teams are using these tools without anyone writing down the rules they should follow.

That’s the gap CLAUDE.md fills.

What CLAUDE.md Actually Does

It’s a markdown file that lives in your project root. Claude Code reads it automatically at the start of every session — before you type a single prompt.

Think of it as persistent onboarding for your AI developer. Your working style, your quality standards, your project context — loaded every time, without you repeating yourself.

You write it once. Claude rereads it every session.

For product managers, this is significant. It means you can encode requirements, business rules, and quality gates directly into the development workflow — not as a Confluence page that gets ignored, but as instructions the AI reads before writing a single line of code.

My First Attempt Was a Disaster

I treated CLAUDE.md like a PRD. Business context. Pricing formulas. Contractor personas. Changelog instructions. Quality checklists. Edge case documentation.

900 lines.

And Claude started ignoring my most important rules. Not out of defiance — because critical instructions were buried under paragraphs of context it didn’t need for most tasks.

Turns out, Anthropic’s own best practices documentation now explicitly calls this out as a top anti-pattern: “If your CLAUDE.md is too long, Claude ignores half of it because important rules get lost in the noise.” Independent research from HumanLayer found that frontier models can reliably follow roughly 150-200 instructions — and Claude Code’s system prompt already consumes about 50 of those before your CLAUDE.md even loads.

My 900 lines didn’t stand a chance.
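Taking those figures at face value, a rough back-of-envelope shows why. This treats one line as roughly one instruction, which is a crude assumption of mine: many lines are context rather than instructions, and some lines pack in several rules.

```latex
\underbrace{(150 \text{ to } 200)}_{\text{reliably followed}} - \underbrace{50}_{\text{system prompt}} = 100 \text{ to } 150 \text{ instructions left for CLAUDE.md}

\frac{900 \text{ lines}}{150} = 6, \qquad \frac{900 \text{ lines}}{100} = 9
```

Under that assumption, a 900-line file asks for six to nine times the remaining budget.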

So I applied the same ruthless prioritization I’d use on a product backlog. For every section, I asked one question: “If I remove this, will Claude make a mistake it wouldn’t otherwise make?”

If yes — keep it. If no — delete it or move it to a reference file that CLAUDE.md can point to.

What Survived the Cut: 118 Lines

Five categories made it through:

Current phase. One line: “MVP mode — don’t suggest big refactors.” This prevents Claude from proposing architecture overhauls when I need features shipped.

How I work. Two lines: “Small, iterative changes. If anything is unclear, ask before guessing.” This matches my operating style and eliminates the biggest source of rework — Claude building the wrong thing confidently.

Build commands. The commands Claude can’t infer from the codebase — dev server, test runner, deployment scripts.

Five non-negotiable quality rules. The exact behaviors I was frustrated about, written as direct instructions.

Pointers to detailed docs. Instead of pasting pricing logic into CLAUDE.md, one line: “See docs/pricing-rules.md for contractor pricing.” Claude reads the referenced file only when it needs that context.

Everything else moved to separate files. CLAUDE.md became the table of contents, not the encyclopedia.
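A table-of-contents CLAUDE.md only works if its pointers stay valid; a renamed doc leaves a line pointing at nothing. Below is a minimal sketch of a check you could run in CI, assuming your pointers use the same "See docs/<name>.md" phrasing as the example above. The regex and the `find_pointers` / `find_missing_pointers` helpers are hypothetical and not part of Claude Code.

```python
import re
from pathlib import Path

# Matches pointers phrased like "See docs/pricing-rules.md" (an assumed convention).
POINTER_RE = re.compile(r"\bSee\s+(docs/[\w./-]+\.md)\b")


def find_pointers(text: str) -> list[str]:
    """Return every docs/... path referenced with the 'See docs/...' phrasing."""
    return POINTER_RE.findall(text)


def find_missing_pointers(claude_md: Path) -> list[str]:
    """Return referenced paths that don't exist, resolved relative to CLAUDE.md's folder."""
    root = claude_md.parent
    text = claude_md.read_text(encoding="utf-8")
    return [p for p in find_pointers(text) if not (root / p).is_file()]
```

To use it, call `find_missing_pointers(Path("CLAUDE.md"))` from a CI step or pre-commit hook and fail when the returned list is non-empty. Pointers written any other way (for example, without the word "See") won't be caught by this regex.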

The 5 Rules That Eliminated 80% of Rework

These are the exact rules in my CLAUDE.md. Each one exists because Claude made the specific mistake it addresses — repeatedly.

Rule 1: NEVER say “Done” without testing. Test the actual user flow. Show me results. If you can’t test it yourself, tell me what to test and wait for confirmation before moving on.

Rule 2: NEVER fabricate data. Verify supplier names, company names, and reference data from search results or existing project files. If the data doesn’t exist in a verified source, don’t invent it.

Rule 3: TodoWrite discipline. Every feature that touches more than 5 lines of code gets tracked with a TodoWrite entry. Mark it complete only after testing — not after writing the code.

Rule 4: Show, don’t tell. Show me actual command output, test results, or screenshots. “It works” is not evidence. Paste what happened.

Rule 5: Restate before implementing. Before writing any code, paraphrase the task back to me: “So you want X that does Y using Z.” Then wait for my confirmation.

That last rule is one line of text. It prevents hours of wrong work every week. Claude builds the wrong thing most often when it thinks it understands the requirement but has filled in assumptions I didn’t make.
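Rule 3's "more than 5 lines" threshold is simple enough to check mechanically. Here is a minimal sketch, assuming you measure a change with `git diff --numstat` (which prints added and deleted line counts per file, with `-` for binary files) and count added plus deleted lines. The helper names and the counting rule are my assumptions; neither Claude Code nor TodoWrite enforces this.

```python
import subprocess

TRACKING_THRESHOLD = 5  # Rule 3: changes touching more than 5 lines get tracked


def lines_touched(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output.

    Each line looks like "<added>\t<deleted>\t<path>"; binary files report "-"
    for both counts and are skipped.
    """
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-" or parts[1] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def needs_tracking(numstat: str, threshold: int = TRACKING_THRESHOLD) -> bool:
    """True when the change exceeds the tracking threshold."""
    return lines_touched(numstat) > threshold


def current_numstat() -> str:
    """Numstat for all uncommitted changes against HEAD (requires git on PATH)."""
    result = subprocess.run(
        ["git", "diff", "--numstat", "HEAD"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

One design consequence worth knowing: git reports an edited line as one deletion plus one addition, so a one-line edit counts as 2 here. If that feels too strict, count only additions instead.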

Why This Matters Beyond Claude Code

If you’re a PM reading this and thinking “I don’t use Claude Code” — the principle still applies.

A February 2026 survey of 15,000 developers found Claude Code is now the most-used AI coding tool, with a 46% “most loved” rating: more than double Cursor’s and five times GitHub Copilot’s. The tool convergence is accelerating: in the first week of April 2026, Cursor shipped multi-agent orchestration, OpenAI published a plugin that runs inside Claude Code, and teams started running all three tools together as layers in a single stack.

Your engineers are already working this way. The question isn’t whether AI is part of your workflow. It’s whether anyone has written down the rules it should follow.

CLAUDE.md is a forcing function. It makes you articulate what “quality” actually means in your project — not as a vague standard, but as specific, testable behaviors. “Don’t ship bugs” becomes “test the actual user flow and show results before marking anything complete.”

That clarity doesn’t just help Claude. It helps every engineer, contractor, and new team member who touches the codebase.

Steal This Template

Here’s the structure I use. Adapt it to your project, your stack, and your pain points.

```markdown
# CLAUDE.md: Vibe Coding Contract

You are my senior engineer and pair programmer.

Help me ship great software quickly, with low ceremony but high quality.

When in doubt, opt for clarity and flow over formality.

---

## Project Snapshot

- Name: [Your project name]
- One-liner: [What this app does in one sentence]
- Stack: [e.g., Next.js + React + TypeScript + Tailwind]
- Current phase: [exploration / MVP build / polish / refactor / debugging]
- My priority right now: [e.g., "ship something usable quickly"]

---

## How I Work

- Small, iterative changes over big rewrites
- If unclear, ask before guessing; don't build the wrong thing confidently
- I value clear naming, readable code, and separation of concerns
- I do NOT want: giant god files, surprise new libraries, or premature abstractions

---

## Before Implementing

Restate the task: "So you want X that does Y using Z." Then WAIT for confirmation.

---

## Commands

- Install: [npm install / pip install -r requirements.txt]
- Dev: [npm run dev / python manage.py runserver]
- Test: [npm test / pytest]
- Lint: [npm run lint]

---

## Quality Standards (NON-NEGOTIABLE)

### 1. NEVER Say "Done" Without Testing

Test the actual user flow. Show results. If you can't test, tell me what to test and WAIT.

### 2. NEVER Fabricate Data

No inventing company names, API endpoints, or sample data that doesn't exist.
Verify from real sources or existing project files.

### 3. Show Don't Tell

Paste actual command output or test results. "It works" is not evidence.

### 4. Track Your Work

Every feature >5 lines gets tracked. Mark complete ONLY after testing.

### 5. Preserve Existing Behavior

Don't break what's working. If a change has side effects, flag them before implementing.

---

## Ask Before You...

- Introduce new core dependencies
- Change project structure or public APIs
- Remove or rewrite large sections of existing code
- Change build tooling, CI, or deployment config

When asking, include: short rationale, alternatives (including "do nothing"), your recommendation.

---

## Key Architecture

- [Pointer to important docs, e.g., "See docs/data-model.md for schema"]
- [Pointer to business rules, e.g., "See docs/pricing-rules.md for pricing logic"]
- [Pointer to API contracts, e.g., "See docs/api-contracts.md for endpoints"]
```

Three principles for maintaining it: keep it under 150 lines; add a rule when Claude makes a mistake you don’t want repeated; and delete any rule that isn’t preventing errors. In short, treat it like a product: iterate based on what’s actually happening, not what might happen.
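The 150-line ceiling is the easiest of these to automate. Here is a minimal sketch of a pre-commit style check. The 150 limit comes from the principle above; skipping blank lines is my own judgment call (spacer lines aren't instructions), so adjust `count_content_lines` if you prefer to count every line. The function names are hypothetical.

```python
from pathlib import Path

MAX_LINES = 150  # ceiling from the maintenance principle above


def count_content_lines(text: str) -> int:
    """Count non-blank lines; blank spacer lines are ignored."""
    return sum(1 for line in text.splitlines() if line.strip())


def check_length(text: str, max_lines: int = MAX_LINES) -> tuple[bool, int]:
    """Return (within_limit, line_count) for the given CLAUDE.md contents."""
    n = count_content_lines(text)
    return n <= max_lines, n


def check_file(path: Path, max_lines: int = MAX_LINES) -> tuple[bool, int]:
    """Convenience wrapper that reads CLAUDE.md from disk."""
    return check_length(path.read_text(encoding="utf-8"), max_lines)
```

For example, `ok, n = check_file(Path("CLAUDE.md"))` lets a hook print `n` and block the commit when `ok` is false, which turns "keep it short" from an intention into a gate.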

The Result

After the rewrite: 900 lines became 118. An 87% reduction.
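The percentage checks out:

```latex
\frac{900 - 118}{900} = \frac{782}{900} \approx 0.869 \approx 87\%
```

At 118 lines, the file also sits comfortably under the 150-line ceiling from the maintenance principles.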

Claude follows the quality rules consistently now. Not perfectly — it still makes mistakes. But the same mistakes don’t repeat across sessions. When Claude fabricates a supplier name, I add a line to the rule. When it skips testing, the rule catches it before I have to.

The compounding effect is what matters. Every fix I encode in CLAUDE.md is a fix I never have to make again. Over weeks of development, that’s dozens of hours recovered — hours I spend building features instead of debugging AI behavior.

Building with AI? I Can Help.

I work with product teams (4-300 people) adopting AI-assisted development workflows. If your organization is rolling out Claude Code, Copilot, or similar tools, I help with:

Quality standards that stick — not another Confluence page, but rules embedded in the development workflow that AI agents actually follow.

Team playbooks for AI pair programming — so your PMs and engineers aren’t each reinventing the wheel on how to work with AI effectively.

CLAUDE.md and prompt architecture — custom config files tuned to your stack, your standards, and your team’s actual pain points.

Ashok Venkatraj helps product teams ship faster by putting AI at the center of how they build, learn, and work together. When he is not consulting, he is building Questimate.co and writing about AI‑assisted product development at clarityforproduct.com.